IoT platform strategies are changing fast. For years, teams pushed most data to the cloud and ran rules and analytics there. However, many modern use cases now demand near-instant action. As a result, more platforms are adding edge AI inference as a core capability.

An IoT platform that supports edge inference can decide locally and then report key events to the cloud. Meanwhile, the cloud can still optimize across sites. This “edge decisions + cloud optimization” model improves speed, cost, and resilience.

Why Cloud-Centric IoT Is Hitting Limits

An IoT platform originally solved three big problems. First, it unified device connectivity. Second, it centralized storage. Third, it enabled remote control through cloud rules. Therefore, it became the backbone for many IoT programs.

However, cloud-only designs face practical constraints.

- Latency and jitter break control loops. For safety and quality, seconds can be too slow.

- Bandwidth costs rise with high-frequency data. Video and vibration streams are expensive to upload.

- Offline risk grows in harsh environments. Sites lose connectivity more often than teams expect.

- Privacy and compliance restrict raw data movement. So, not everything can go to the cloud.

As these pressures combine, the IoT platform must move intelligence closer to devices.

What “Edge AI Inference” Really Means in an IoT Platform

Edge inference is not the same as training. Training usually happens in the cloud. In contrast, inference runs at the edge. It turns sensor inputs into decisions in near real time.

An IoT platform “integrates” edge inference when it provides platform-grade management. For example, it should handle model distribution, version control, and rollback. In addition, it should monitor latency, accuracy signals, and resource use.

This matters because isolated edge scripts do not scale. Instead, an IoT platform must make edge AI repeatable across hundreds or thousands of nodes.

The New Architecture: Edge Decisions, Cloud Optimization

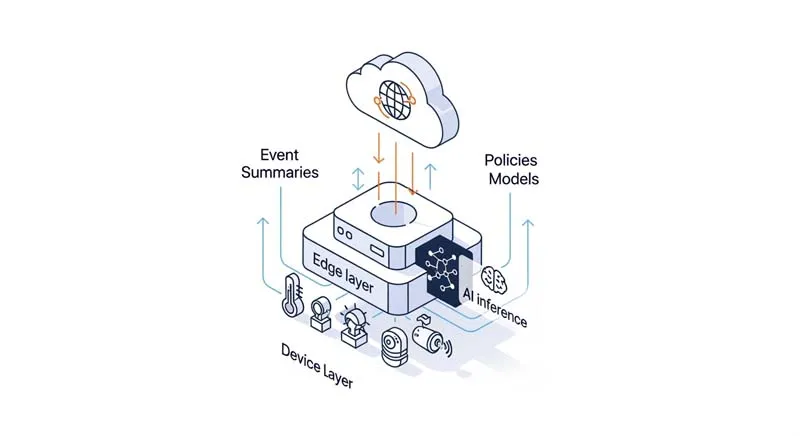

A modern IoT platform commonly uses a three-layer design.

Device layer

Devices generate signals and execute actions. This layer includes sensors, actuators, and firmware. Because every upstream decision depends on these signals, device identity and telemetry integrity matter here.

Edge layer

The edge layer runs inference and local workflows. It also preprocesses data. Moreover, it buffers messages during outages. As a result, it supports stable on-site decisions.
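
The outage buffering described above can be sketched as a small store-and-forward queue. This is a minimal illustration, not a production design: the class name `StoreAndForwardBuffer`, the `send` callback, and the choice to drop the oldest message when the buffer is full are all assumptions made for this example. A real edge node would usually persist the queue to disk as well.

```python
from collections import deque


class StoreAndForwardBuffer:
    """Bounded in-memory queue that holds messages while the uplink is down."""

    def __init__(self, max_messages=1000):
        # A deque with maxlen silently drops the oldest entry when full.
        self._queue = deque(maxlen=max_messages)

    def publish(self, message, send):
        """Queue the message, then try to deliver everything in order."""
        self._queue.append(message)
        return self.flush(send)

    def flush(self, send):
        """Deliver queued messages oldest first; stop at the first failure.

        `send` is a hypothetical uplink callback that raises ConnectionError
        when the cloud is unreachable. Returns the number of messages sent.
        """
        sent = 0
        while self._queue:
            try:
                send(self._queue[0])
            except ConnectionError:
                break
            self._queue.popleft()
            sent += 1
        return sent

    def __len__(self):
        return len(self._queue)
```

Calling `flush` again once connectivity returns drains the backlog in the original order, so the cloud sees events in the sequence they occurred.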

Cloud layer

The cloud layer trains models, manages governance, and runs cross-site analytics. In addition, it supports global policy optimization. Therefore, the cloud becomes a strategic control plane.

In this model, the IoT platform changes the shape of the data it sends upstream. Instead of raw streams, it sends events, features, summaries, and KPIs. Consequently, cloud storage and compute are used more efficiently.
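
As a rough sketch of what sending summaries instead of raw streams can look like, the function below reduces one window of sensor readings to a handful of fields. The function name `summarize_window`, the field names, and the single-threshold rule are assumptions chosen for illustration.

```python
from statistics import mean


def summarize_window(samples, threshold):
    """Reduce a window of raw readings to a compact summary for upload."""
    if not samples:
        raise ValueError("samples must not be empty")
    peak = max(samples)
    return {
        "count": len(samples),
        "min": min(samples),
        "max": peak,
        "mean": mean(samples),
        # Flag the window so the cloud can treat it as an event.
        "threshold_exceeded": peak > threshold,
    }
```

However many samples fall into a window, the upstream message has the same five fields, which is where the bandwidth saving comes from.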

Core Capabilities Your IoT Platform Must Provide

Edge inference only creates value when operations stay controlled. So, an IoT platform needs several production-grade capabilities.

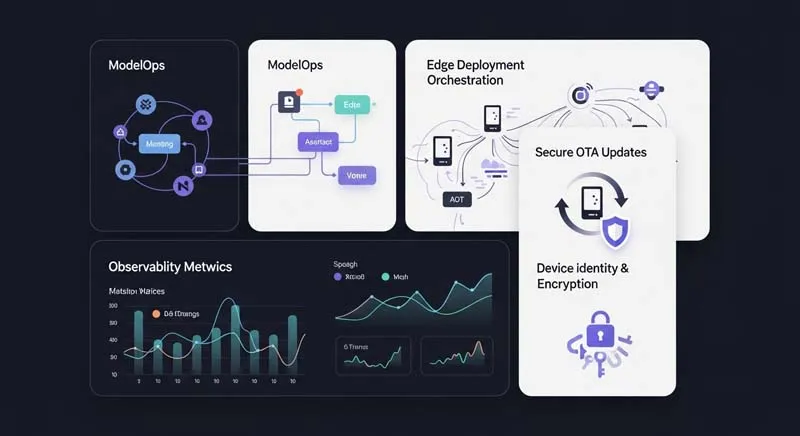

1) Model lifecycle management (ModelOps)

Your IoT platform should support model registration and versioning. It should also support staged rollout. For example, you can deploy to one site first. Then you can expand gradually. Moreover, you need fast rollback when quality drops.
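
The staged rollout and rollback flow can be sketched as follows. This is a simplified illustration under stated assumptions: `deploy`, `is_healthy`, and `rollback` are hypothetical callbacks that a platform would supply, and the policy of rolling back every updated site on the first failed health check is only one possible choice.

```python
def staged_rollout(sites, stages, deploy, is_healthy, rollback):
    """Deploy to growing fractions of the fleet; roll back on a failed check.

    stages: increasing fractions of the fleet, for example [0.25, 0.5, 1.0].
    Returns the sites left on the new version (empty after a rollback).
    """
    updated = []
    for fraction in stages:
        # Always include at least one site in the first stage.
        target_count = max(1, int(len(sites) * fraction))
        for site in sites[len(updated):target_count]:
            deploy(site)
            updated.append(site)
        # Gate the next stage on the health of everything updated so far.
        if not all(is_healthy(site) for site in updated):
            for site in reversed(updated):
                rollback(site)
            return []
    return updated
```

In practice `is_healthy` would look at quality signals such as latency or error rates over an observation period rather than returning immediately.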

2) Edge deployment and resource orchestration

Edge hardware is diverse. So, the IoT platform must support x86 and ARM. It should also handle NPU and GPU acceleration. In addition, it should enforce CPU and memory limits. Otherwise, inference may starve critical services.

3) Data pipeline and feature consistency

Training features must match inference features. Therefore, an IoT platform should standardize preprocessing steps. It should also track schema versions. As a result, teams reduce “works in lab, fails in field” errors.
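
One way to enforce this at the edge is to treat the training schema as a contract and refuse to run inference when it is broken. The sketch below assumes a hypothetical schema with three made-up feature names and an integer version; none of these come from a specific platform.

```python
# Hypothetical schema exported alongside the trained model.
TRAINING_SCHEMA = {
    "version": 3,
    "features": ["temp_c", "vibration_rms", "rpm"],
}


def build_feature_vector(reading, schema_version, schema=TRAINING_SCHEMA):
    """Return features in training order, or raise if the contract is broken."""
    if schema_version != schema["version"]:
        raise ValueError(
            f"schema version {schema_version} does not match {schema['version']}"
        )
    missing = [name for name in schema["features"] if name not in reading]
    if missing:
        raise ValueError(f"missing features: {missing}")
    # Order follows the schema, not the incoming dict, so the model always
    # sees the same column layout it was trained on.
    return [float(reading[name]) for name in schema["features"]]
```

Failing loudly on a version mismatch is a deliberate choice here: a rejected reading is easier to diagnose than a silently wrong prediction.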

4) Observability beyond uptime

Basic uptime metrics are not enough. Instead, an IoT platform should monitor inference latency and throughput. It should also track error rates and drift signals. Moreover, it should correlate events with device states. Consequently, teams can diagnose issues faster.
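
Two of these signals can be computed with the Python standard library alone. The sketch below is illustrative: `latency_p95` reports a tail latency, and `drift_score` is a deliberately crude stand-in for a drift signal (the shift of a recent mean measured in baseline standard deviations), not a standard drift test. Both function names are assumptions.

```python
from statistics import mean, pstdev, quantiles


def latency_p95(latencies_ms):
    """95th percentile of inference latency samples (needs at least two)."""
    # n=20 yields 19 cut points; the last one is the 95th percentile.
    return quantiles(latencies_ms, n=20)[-1]


def drift_score(baseline, recent):
    """Shift of the recent mean from the baseline mean, in baseline std devs."""
    spread = pstdev(baseline)
    shift = abs(mean(recent) - mean(baseline))
    if spread == 0:
        # A constant baseline makes any shift infinitely surprising.
        return 0.0 if shift == 0 else float("inf")
    return shift / spread
```

A platform would typically compute these per node and per model version, then alert when either value crosses an agreed limit.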

5) Secure and trusted updates

Edge nodes are part of your attack surface. Therefore, an IoT platform must enforce device identity, certificates, and encrypted transport. It should also verify signed model packages. In addition, it should support least-privilege access control.
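
The package verification step can be illustrated with a keyed hash. Note the assumption: this sketch uses HMAC-SHA256 with a shared secret because it is available in the Python standard library, whereas production systems more commonly use asymmetric signatures so that edge nodes hold only a public key. The function names are hypothetical.

```python
import hashlib
import hmac


def sign_package(package_bytes, key):
    """Compute a hex HMAC-SHA256 tag over the model package bytes."""
    return hmac.new(key, package_bytes, hashlib.sha256).hexdigest()


def verify_package(package_bytes, signature_hex, key):
    """Return True only if the tag matches the package contents."""
    expected = sign_package(package_bytes, key)
    # compare_digest avoids leaking information through timing differences.
    return hmac.compare_digest(expected, signature_hex)
```

An edge node would run `verify_package` before loading a downloaded model and reject the update if it returns `False`.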

High-Value Use Cases for Edge Inference

Edge inference works best where time and cost matter. So, start with scenarios that have clear ROI.

Industrial manufacturing

Cameras and sensors can detect defects at the line. Then the edge can stop or reroute production quickly. Meanwhile, the cloud can compare patterns across plants. As a result, teams improve yield and reduce downtime.

Energy and building systems

Edge nodes can detect abnormal consumption or equipment anomalies and then trigger local control actions. In addition, the cloud can optimize demand response across regions. Consequently, operators cut waste and stabilize operations.

Security and smart mobility

Edge vision can detect events and filter noise. So, only relevant clips and metadata go upstream. Moreover, privacy can improve with on-device masking. As a result, the system becomes both faster and more compliant.

Cold chain and logistics

Edge logic can react to temperature excursions immediately. Because the logic runs locally, it can trigger alerts even during network loss. In addition, cloud analytics can refine route policies and thresholds. Consequently, teams protect goods and reduce claims.
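
A local excursion check of this kind needs no network access at all. The sketch below flags a run of consecutive readings above a limit; the function name, the `min_consecutive` debounce parameter, and the rule itself are assumptions for illustration, since real thresholds depend on the goods being shipped.

```python
def detect_excursions(readings, max_temp_c, min_consecutive=3):
    """Return the start index of each sustained run above max_temp_c.

    A run counts once it reaches min_consecutive readings, which filters
    out brief spikes such as a door opening.
    """
    excursions = []
    run_start = None
    for index, temp_c in enumerate(readings):
        if temp_c > max_temp_c:
            if run_start is None:
                run_start = index
            # Report each run exactly once, when it first becomes sustained.
            if index - run_start + 1 == min_consecutive:
                excursions.append(run_start)
        else:
            run_start = None
    return excursions
```

The returned indices can drive a local alarm immediately and be queued for upload once the link returns.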

Common Pitfalls and How to Avoid Them

Edge AI adds capability, but it also adds complexity. So, plan for these issues early.

- Hardware fragmentation reduces portability. Therefore, use a runtime strategy with clear target profiles.

- Model drift erodes accuracy over time. So, monitor drift signals and build feedback loops.

- Overloading the edge can break core services. So, enforce resource budgets and priorities.

- Poor ROI framing leads to stalled pilots. Therefore, define measurable metrics from day one.

A mature IoT platform should help address these pitfalls through governance and automation.

A Practical Rollout Path for Teams

Most companies succeed with a staged approach.

Step 1: POC with one scenario

Pick one site and one model. Then validate latency, accuracy, and cost. Moreover, measure bandwidth savings and operational impact.

Step 2: Pilot across multiple sites

Expand carefully with staged rollout. This lets you test monitoring, upgrades, and rollback. In addition, confirm offline behavior under real conditions.

Step 3: Scale with platform governance

At scale, standardization wins. So, unify model registry, deployment pipelines, and observability. As a result, each new site becomes faster to onboard.

Throughout this journey, keep the IoT platform as the governance center. Otherwise, edge deployments will drift into fragile one-offs.

Conclusion: The IoT Platform Becomes a Decision System

An IoT platform is no longer only a data hub. Instead, it becomes a decision system. Edge inference enables real-time local action. Meanwhile, cloud optimization enables cross-site learning and policy improvement. Therefore, the best results come from strong edge-cloud collaboration.

Ultimately, success depends on execution quality. So, many teams also look for partners with deep wireless and IoT engineering experience. For example, EELINK Communication focuses on applying wireless communication technology to the Internet of Things. Its team has over 20 years of IoT hardware and software R&D and manufacturing experience. Its product scope includes remote monitoring platforms for temperature and humidity, along with asset management, vehicle anti-theft and insurance-related services, and cold chain transport management. As a result, organizations can accelerate reliable deployments while continuing to evolve their IoT platform strategy.